
Quick Summary

This AI call center case study covers a real-world scenario in which a mid-sized e-commerce brand used contact center AI to revamp its customer support operations. The brand used conversational AI, Real-time Agent Assist (RTAA), post-call automation, and intelligent call routing to reach an average resolution time of 15 seconds on its most frequent tickets. It also reduced call volume to human agents by 41%.

The takeaway: call center automation success comes down to data quality, clear escalation criteria, and a structured approach to execution. Businesses can combine NLP, sentiment analysis, and proactive outreach campaigns such as cart abandonment follow-ups to significantly improve the efficiency of call center operations.

Introduction

What does a successful AI call center case study look like in reality? Most discussions around contact center AI and call center automation are still theoretical. This blog is a departure from that norm.

We are going to look at a real-world case where conversational AI, Natural Language Processing, sentiment analysis, and intelligent call routing were used to resolve operational issues in a call center environment. The project followed a structured approach to execution to achieve measurable Customer Experience (CX) optimization.

What Does a Winning AI Call Center Case Study Look Like?

We will get to the architecture, the prompt engineering, and the pivot stories later. First, the numbers that count. This is a case study of an AI call center implementation at a mid-sized e-commerce brand handling an average of 18,000 inbound support calls every month.

Their previous operating model was at a breaking point: 48-hour ticket backlogs, 34 percent annual agent turnover, and a customer satisfaction (CSAT) score that had dropped to 61 percent, far below the 75 percent industry average for retail and e-commerce call centers (Salesforce State of Service, 2024).

Our North Star Metric: bring the average handle time on tier-1 queries below 30 seconds. The outcome after 90 days of implementing the Botphonic contact center AI stack: a 15-second average resolution time for the 20 percent most frequent tickets, a 41 percent decrease in call volume to human agents, and CSAT improved to about 84 percent.

The Tech Stack at a Glance:

- Large Language Models (LLMs) for conversation and intent classification.

- CRM integration (Salesforce) for real-time access to customer data.

- Voice-to-text transcription APIs (Google Speech-to-Text + Deepgram).

- NLP pipelines for sentiment scoring.

- Post-call automation for tagging, summarization, and CRM updates.

- An intelligent call routing engine driven by caller intent and history.


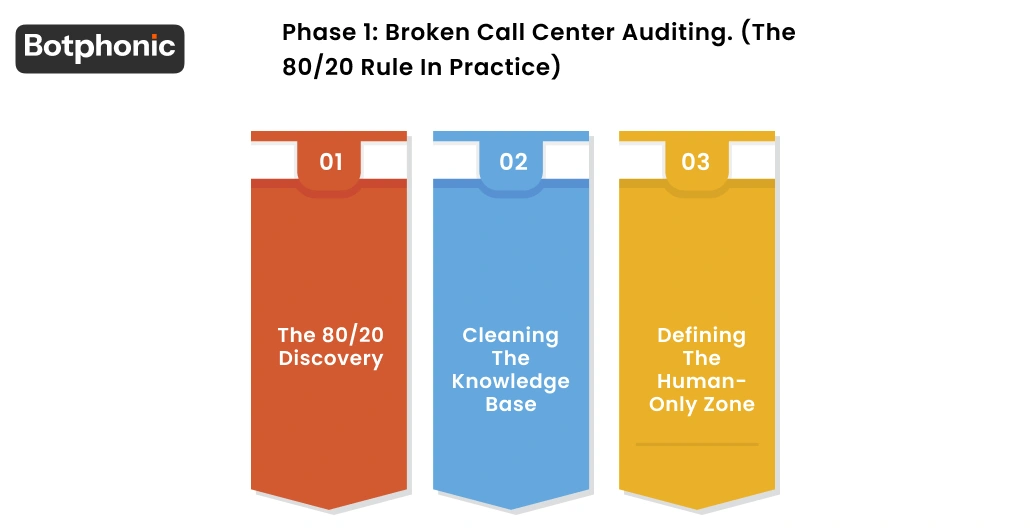

Phase 1: Auditing the Broken Call Center (The 80/20 Rule in Practice)

The biggest mistake most companies make when automating a call center is jumping straight to the technology. We did the opposite: we took two weeks and did nothing but read. We pulled 90 days of call transcripts and ticket tags to understand what was really driving volume before touching any tooling.

1. The 80/20 Discovery

We found a typical Pareto distribution. Just 6 ticket categories accounted for 79 percent of all inbound contact volume. They were:

- Order status requests

- Shipping delays

- Return initiations

- Password resets

- Failed promo codes

- Product availability checks
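The Pareto discovery described above can be reproduced with very little code. The following is a minimal sketch, assuming ticket tags are available as a flat list of strings; the function name `top_contact_drivers`, the tag names, and the sample counts are all hypothetical and are not taken from the client's data.

```python
from collections import Counter

def top_contact_drivers(ticket_tags, coverage=0.8):
    """Return the most frequent tags, in order, until their cumulative
    share of total volume reaches `coverage`."""
    counts = Counter(ticket_tags)
    total = sum(counts.values())
    drivers, running = [], 0
    for tag, count in counts.most_common():
        if total == 0 or running / total >= coverage:
            break
        running += count
        drivers.append((tag, count / total))
    return drivers

# Hypothetical sample data: 100 tickets across six tags.
sample_tags = (
    ["order_status"] * 40 + ["shipping_delay"] * 25 + ["return"] * 15
    + ["billing"] * 10 + ["warranty"] * 6 + ["other"] * 4
)

for tag, share in top_contact_drivers(sample_tags):
    print(f"{tag}: {share:.0%}")
```

Running this kind of tally against 90 days of real tags is what tells you which handful of categories deserve automation first.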

These are exactly the kinds of queries that order tracking chatbots and intelligent call routing services are designed to handle.

IBM research estimates that the right conversational AI infrastructure can help businesses automate as many as 80% of routine customer inquiries (IBM Institute for Business Value, 2023).

2. Cleaning the Knowledge Base

Years of internal documentation, product FAQs, shipping carrier SLAs, and other documents that the client had painstakingly compiled were locked up in 50+ page PDFs with inconsistent formatting. This is the point at which the majority of AI implementations quietly collapse. If the knowledge base is trash, the answers will be too.

We followed a systematic chunking procedure: each document was broken down into chunks of 300-500 tokens with clear metadata tags such as topic, last-updated date, and source authority. This made the content readable to our NLP retrieval layer and ensured that the AI was referring to correct, up-to-date information.
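To make the chunking step concrete, here is a minimal sketch under stated assumptions: whitespace-separated words stand in for tokens (a real pipeline would count tokens with the model's own tokenizer), and the `Chunk` class, `chunk_document` function, and sample metadata values are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Chunk:
    text: str
    topic: str
    last_updated: date
    source: str

def chunk_document(text, topic, last_updated, source, max_tokens=400):
    """Split a document into fixed-size chunks that each carry metadata.
    Words approximate tokens here, which is an assumption."""
    words = text.split()
    chunks = []
    for start in range(0, len(words), max_tokens):
        piece = " ".join(words[start:start + max_tokens])
        chunks.append(Chunk(piece, topic, last_updated, source))
    return chunks

# Hypothetical 1,000-word document.
doc = "word " * 1000
chunks = chunk_document(doc, "returns-policy", date(2024, 1, 15), "policy-handbook.pdf")
print(len(chunks), [len(c.text.split()) for c in chunks])
```

Note that this naive splitter can leave a final chunk shorter than the 300-token lower bound; a production version would likely split on headings or paragraphs and merge short tails.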

3. Defining the Human-Only Zone

We drew a hard line around the following scenarios, which the AI was strictly not allowed to handle:

- Subscription cancellation requests.

- Threats or mentions of legal action.

- High-value price disputes above 500.

- Cases in which a caller mentioned the words “lawsuit,” “attorney,” or “BBB.”

- Any conversation in which the sentiment analysis model identified the caller as being in extreme distress.
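A human-only zone like this one can be expressed as a small rule check that runs before the AI is allowed to respond. The sketch below is illustrative only: the `Interaction` fields, the intent labels, and the `human_only_reason` function are hypothetical, and it assumes an upstream classifier and sentiment model already supply the intent and distress flag.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Keywords named in the article; matched as whole words, case-insensitively.
LEGAL_KEYWORDS = re.compile(r"\b(lawsuit|attorney|bbb)\b", re.IGNORECASE)
DISPUTE_LIMIT = 500  # threshold from the article; the currency is not stated

@dataclass
class Interaction:
    transcript: str
    intent: str                     # hypothetical label from an upstream classifier
    dispute_amount: float = 0.0
    extreme_distress: bool = False  # hypothetical flag from the sentiment model

def human_only_reason(interaction: Interaction) -> Optional[str]:
    """Return why this interaction must go to a human, or None if the AI may proceed."""
    if interaction.intent == "cancel_subscription":
        return "subscription cancellation"
    if interaction.intent == "legal_threat" or LEGAL_KEYWORDS.search(interaction.transcript):
        return "legal action mentioned"
    if interaction.intent == "price_dispute" and interaction.dispute_amount > DISPUTE_LIMIT:
        return "high-value price dispute"
    if interaction.extreme_distress:
        return "extreme distress"
    return None

print(human_only_reason(Interaction("I will call my attorney", "order_status")))
```

Keeping these rules as plain, auditable code rather than burying them in a prompt makes it easier to prove that a given scenario can never reach the autonomous path.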

Phase 2: The AI Brain: How Was It Made?

The field of Logic Architecture and Prompt Engineering has always been a very competitive one.

With the data cleaned up and the boundaries defined, we began building the engine of the AI receptionist. This is where contact center AI moves from theory to operational reality.

Prompt Engineering Is More Than Just Instructions

The system prompt, which we refer to as the brain instructions, was developed to establish four things:

- A brand voice (friendly, direct, never sarcastic)

- A response length limit per query type

- Hard prohibitions (never speculate on delivery dates, never offer unauthorized discounts)

- Escalation triggers

It took us more than 40 iterations to get this right. A poorly constructed system prompt produces an AI that technically answers the question but falls short of brand expectations or undermines customer trust.

The Critical Logic Fork: Emotional vs. Transactional Intent

Our NLP classification layer sorted every incoming interaction into one of two lanes before the conversational AI responded.

- Transactional Intent: A query such as “Where is my order?” or “How do I return this?” triggered a short, fast, data-pull response.

- Emotional Intent: A caller saying something like “This is the third time this has happened to me” instantly changed the routing logic. The AI became more empathetic, slowed down, and set up a warm handoff to a live agent.

This is the single most important architectural choice in customer experience (CX) optimization.
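The two-lane fork can be sketched as a classification step followed by a response plan. In the sketch below, a simple phrase match stands in for the NLP classification layer, which is a deliberate simplification; the cue list, `classify_lane`, and `plan_response` are hypothetical and do not represent the production classifier.

```python
# Hypothetical cue phrases; a real system would use a trained intent model.
EMOTIONAL_CUES = ("third time", "unacceptable", "frustrated", "fed up")

def classify_lane(utterance: str) -> str:
    """Assign an utterance to the 'emotional' or 'transactional' lane."""
    text = utterance.lower()
    if any(cue in text for cue in EMOTIONAL_CUES):
        return "emotional"
    return "transactional"

def plan_response(utterance: str) -> dict:
    """Choose a response style and decide whether to set up a warm handoff."""
    lane = classify_lane(utterance)
    if lane == "emotional":
        return {"lane": lane, "style": "empathetic", "warm_handoff": True}
    return {"lane": lane, "style": "concise", "warm_handoff": False}

print(plan_response("Where is my order?"))
print(plan_response("This is the third time this has happened to me"))
```

The point of the structure is that the lane is decided before any reply is generated, so tone and routing are never an afterthought.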

Giving the AI Hands: API Connectors

Reading intent is not enough; the AI also had to be able to act. We built two-way API connections to Salesforce CRM, the client’s order management system (OMS), and their shipping carrier’s tracking endpoint. This meant the AI could retrieve a live order status, start a return label, or issue a pre-approved courtesy credit within the same conversation, without a human agent ever touching a keyboard. For proactive cart abandonment outreach, we also connected the AI to the email and SMS automation layer to run personalized follow-up sequences based on the outcome of the conversation.
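One common way to give a conversational model "hands" is an allow-list of named actions that it can request. The sketch below assumes that pattern; it uses in-memory stand-ins instead of the real Salesforce, OMS, or carrier APIs, and every name in it (`ORDERS`, `CREDIT_CAP`, `run_action`, the action names) is hypothetical.

```python
from typing import Callable, Dict

# In-memory stand-ins for the CRM, OMS, and carrier systems (hypothetical data).
ORDERS = {"A1001": {"status": "in transit", "credits": 0.0}}
CREDIT_CAP = 10.0  # hypothetical pre-approved courtesy credit limit

def get_order_status(order_id: str) -> str:
    return ORDERS[order_id]["status"]

def start_return_label(order_id: str) -> str:
    return f"RETURN-{order_id}"

def issue_courtesy_credit(order_id: str, amount: float) -> float:
    # Anything above the pre-approved cap must go to a human.
    if amount > CREDIT_CAP:
        raise PermissionError("credit exceeds the pre-approved cap")
    ORDERS[order_id]["credits"] += amount
    return ORDERS[order_id]["credits"]

# Only actions listed here can ever be executed on the AI's behalf.
ACTIONS: Dict[str, Callable] = {
    "get_order_status": get_order_status,
    "start_return_label": start_return_label,
    "issue_courtesy_credit": issue_courtesy_credit,
}

def run_action(name: str, **kwargs):
    if name not in ACTIONS:
        raise ValueError(f"unknown action: {name}")
    return ACTIONS[name](**kwargs)

print(run_action("get_order_status", order_id="A1001"))
```

An explicit registry keeps the set of things the AI can do small and reviewable, and limits such as the credit cap are enforced in code rather than left to the prompt.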

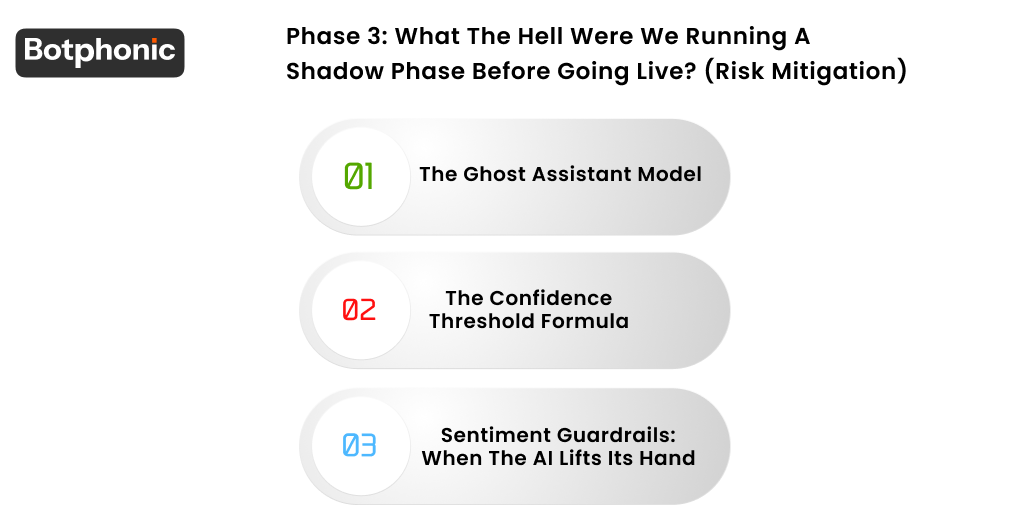

Phase 3: Why Did We Run a Shadow Phase Before Going Live? (Risk Mitigation)

This is the stage that most vendors skip, and skipping it is a major reason so many AI deployments are pulled within 60 days of release. Our 14-day Shadow Phase used the Ghost Assistant model.

1. The Ghost Assistant Model

During the Shadow Phase, the Real-time Agent Assist (RTAA) layer of Botphonic ran in parallel with all live agent conversations: listening, transcribing, and drafting suggested replies in real time.

The AI presented suggested replies to the agents with two options: approve or edit. During this window, the AI never addressed the customer directly. This gave us 14 days of real performance data from the field, covering failure patterns and agent trust in the system, before going fully autonomous on tier-1 queries.

2. The Confidence Threshold Formula

We had a no-fly rule: if the AI’s internal confidence score was below 85%, the system stayed silent and passed the interaction to a human agent with no noticeable break in the caller experience.

During the Shadow Phase, about 23 percent of interactions fell below this threshold. In other words, the AI recognized its own uncertainty and held back. That kind of self-awareness is what separates a production-grade system from a demo.
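The no-fly rule reduces to a single comparison plus a way to measure how often it fires. This is a minimal sketch, assuming the confidence score is a number between 0 and 1 and that the threshold of 85 means 85%; `CONFIDENCE_FLOOR`, `route`, and `deferral_rate` are hypothetical names.

```python
CONFIDENCE_FLOOR = 0.85  # the article's threshold, read here as 85% (an assumption)

def route(confidence: float, draft_reply: str) -> dict:
    """Let the AI answer only when confidence meets the floor; otherwise defer."""
    if confidence < CONFIDENCE_FLOOR:
        return {"handler": "human", "reply": None}
    return {"handler": "ai", "reply": draft_reply}

def deferral_rate(confidences) -> float:
    """Share of interactions that fell below the floor."""
    confidences = list(confidences)
    if not confidences:
        return 0.0
    below = sum(1 for c in confidences if c < CONFIDENCE_FLOOR)
    return below / len(confidences)

# Hypothetical scores from a shadow run.
scores = [0.90, 0.50, 0.95, 0.99]
print(route(0.72, "Your order ships tomorrow."))
print(deferral_rate(scores))
```

Tracking the deferral rate over the shadow period is what lets you see whether a chosen floor is too strict or too lenient before the system handles calls on its own.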

3. Sentiment Guardrails: When the AI Raises Its Hand

The sentiment analysis model was set up to track tone in real time. As soon as a caller’s language signalled frustration, which the system detected through word choice, speaking rate, and the rhythm of silences, an automatic escalation signal was raised.

The system would immediately notify a senior agent, and a context summary (caller name, issue, conversation history) would be ready before the transfer was made. This is automated quality assurance (Auto-QA) in its most practical form: acting at the moment it counts.
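The guardrail and handoff can be sketched as a score over the three signals mentioned above, followed by a context packet for the receiving agent. Everything numeric here is an assumption made for illustration: the weights, the 0.7 threshold, and the idea that each signal arrives normalized between 0 and 1. The names `CallState`, `frustration_score`, and `maybe_escalate` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical weights for the three signals; each signal is assumed to be in [0, 1].
WEIGHTS = {"word_choice": 0.5, "speaking_rate": 0.3, "silence": 0.2}
ESCALATION_THRESHOLD = 0.7  # hypothetical cutoff

@dataclass
class CallState:
    caller_name: str
    issue: str
    history: List[str] = field(default_factory=list)

def frustration_score(signals: Dict[str, float]) -> float:
    """Weighted sum of the signals; missing signals count as 0."""
    return sum(WEIGHTS[name] * signals.get(name, 0.0) for name in WEIGHTS)

def maybe_escalate(call: CallState, signals: Dict[str, float]) -> Optional[dict]:
    """Return a handoff packet for a senior agent, or None if no escalation is needed."""
    score = frustration_score(signals)
    if score < ESCALATION_THRESHOLD:
        return None
    return {
        "notify": "senior_agent",
        "caller_name": call.caller_name,
        "issue": call.issue,
        "history": list(call.history),
        "score": score,
    }

call = CallState("Dana", "late delivery", ["Asked for order status", "Reported second delay"])
print(maybe_escalate(call, {"word_choice": 0.9, "speaking_rate": 0.8, "silence": 0.6}))
```

Building the packet at the moment of escalation means the senior agent sees the caller's name, issue, and history before saying hello, which is what makes the handoff feel warm rather than like a restart.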

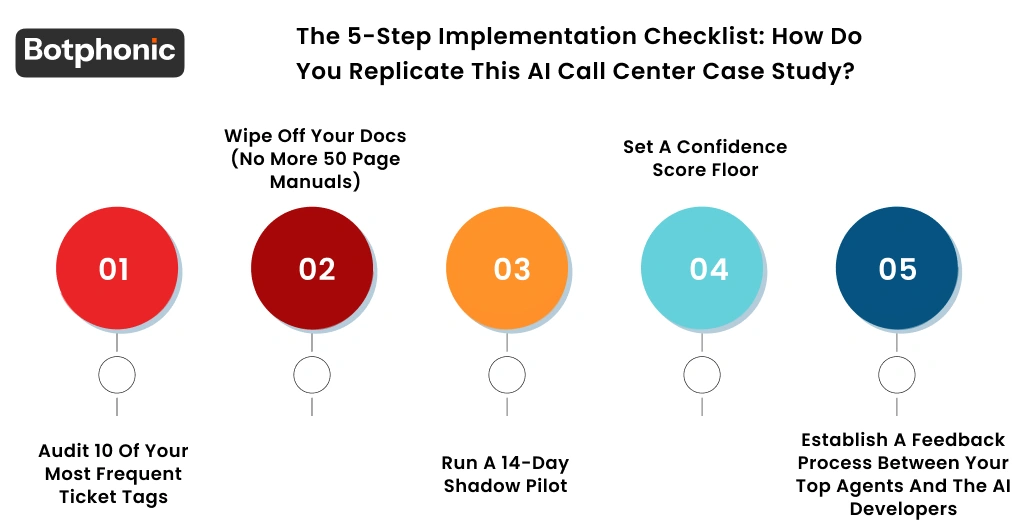

The 5-Step Implementation Checklist: How Do You Replicate This AI Call Center Case Study?

If you are assessing call center automation for your own organization, this is the exact framework we use at Botphonic. Skipping any of these steps is a recipe for a failed deployment.

1: Audit Your 10 Most Frequent Ticket Tags

Retrieve 90 days of ticket data and rank your contact drivers by volume. Clean up the tagging in your data before you touch any AI tooling. What you cannot measure, you cannot automate.

2: Clean Up Your Docs (No More 50-Page Manuals)

Your AI is only as precise as your knowledge source. All policy documents, including FAQs and product guides, must be broken down into small, manageable chunks with a uniform style. If your human agents can’t find the answer, your AI won’t be able to either.

3: Run a 14-Day Shadow Pilot

Never introduce autonomous AI to live customer conversations without first running a shadow stage of Real-time Agent Assist. Failure modes that are not evident in a sandbox environment will surface during two weeks of running in parallel. Treat this data as gold.

4: Set a Confidence Score Floor

Before you let the system handle a single live call independently, define your minimum acceptable confidence level. For most deployments, the right floor is 80-85%. Anything below it should be forwarded to a human agent with a full context handoff.

5: Establish a Feedback Loop Between Your Top Agents and the AI Developers

Your senior agents know where the AI fails before the data shows it. Set up a weekly review in which your best performers flag poorly handled conversations, wrong responses, and tone mismatches. This is the fuel for continuous improvement of the model.

Is AI Becoming the New Norm in Contact Centers?

This AI call center case study is not a rare exception. Gartner has projected that by 2026 conversational AI will be used in more than 80 percent of enterprise contact centers. The gap between companies that have built contact center AI into their CX processes and those that still run fully manual queues will determine which brands customers stay loyal to and which they leave.

What this case study demonstrates is that the technology (intelligent call routing, NLP-based intent classification, sentiment analysis, post-call automation, and real-time agent assist) is mature enough to be put into production. The variables that separate success from failure are process variables: the quality of your data audit, the thoroughness of your human-only zone definition, and the rigor of your shadow run before going live.

AI is no longer a competitive edge in customer service; it is the baseline expectation. Companies that empower their agents instead of replacing them will win, as will those that implement thoughtfully, with clean data and established guardrails, so that they can truly scale.

Conclusion

The takeaway from this AI contact center case study: success is not achieved by simply connecting contact center AI and expecting things to magically work out. It’s achieved through discipline: data, guardrails, and a structured approach. While it’s true that technologies like conversational AI, natural language processing (NLP), sentiment analysis, and intelligent call routing are already mature in an AI call assistant, it’s not the technologies that set the winners apart; it’s the execution.

Call center automation done well doesn’t replace people; it empowers them. It automates simple inquiries in real time, freeing people to focus on more meaningful conversations and proactive outreach like cart abandonment campaigns. This is true Customer Experience (CX) optimization.

The bottom line: AI in contact centers is not a nice-to-have; it’s a necessity. It’s business infrastructure. The businesses that get it right today will set the bar for customer experience in the next decade.
